Overall Architecture of DivE

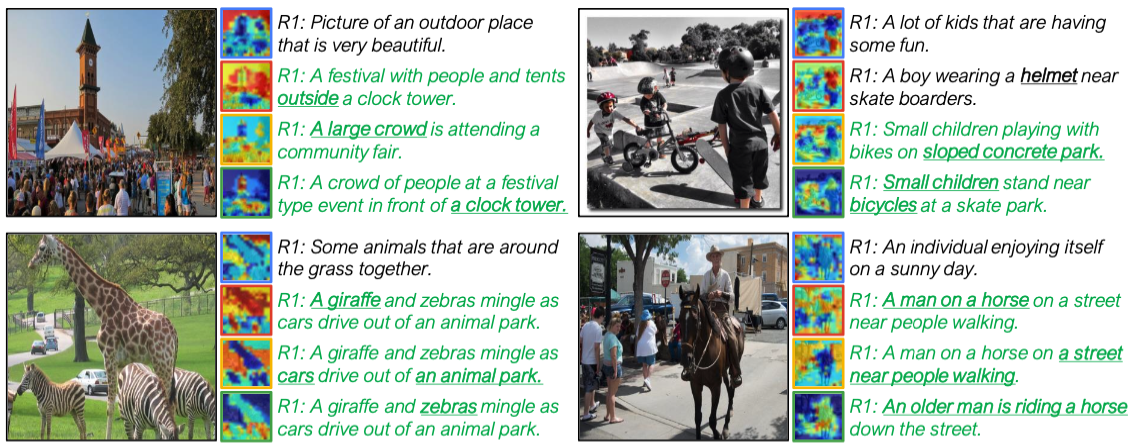

Figure 1. An overview of our model. (a) The overall framework. The model consists of three parts: a visual feature extractor, a textual feature extractor, and the set-prediction modules $f^V$ and $f^T$. First, the feature extractor of each modality extracts local and global features from an input sample. These features are then fed to the set-prediction modules, which produce the embedding sets $S^V$ and $S^T$. The model is trained with a loss based on our smooth-Chamfer similarity. (b) Details of the set-prediction module and the attention maps produced by its slots at each iteration. A set-prediction module consists of multiple aggregation blocks that share their weights. Note that $f^V$ and $f^T$ have the same architecture.
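The data flow in Figure 1 can be illustrated with a minimal, dependency-free sketch. It is not the implementation behind the figure. The attention rule inside `aggregation_block`, the way the global feature is fused in `set_prediction`, the `scale` parameter, and the LogSumExp form and `alpha` value used in `smooth_chamfer` are all assumptions made for illustration; the caption only states that the aggregation blocks share weights and that training uses a smooth-Chamfer similarity between embedding sets.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def l2_normalize(v):
    norm = math.sqrt(dot(v, v)) or 1.0
    return [a / norm for a in v]

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def logsumexp(xs):
    m = max(xs)
    return m + math.log(sum(math.exp(x - m) for x in xs))

def aggregation_block(slots, local_feats, scale):
    """Hypothetical aggregation step: each slot attends over the local features.

    `scale` stands in for the block's learnable weights (an assumption).
    """
    new_slots, attn_maps = [], []
    for slot in slots:
        attn = softmax([scale * dot(slot, f) for f in local_feats])
        pooled = [sum(w * f[d] for w, f in zip(attn, local_feats))
                  for d in range(len(slot))]
        new_slots.append([s + p for s, p in zip(slot, pooled)])  # residual update
        attn_maps.append(attn)
    return new_slots, attn_maps

def set_prediction(local_feats, global_feat, init_slots, num_iters=3, scale=1.0):
    """Apply the same block `num_iters` times, i.e. the weights are shared."""
    slots, maps_per_iter = init_slots, []
    for _ in range(num_iters):
        slots, attn = aggregation_block(slots, local_feats, scale)
        maps_per_iter.append(attn)
    # Assumed fusion: add the global feature to every slot, then normalize.
    embeddings = [l2_normalize([s + g for s, g in zip(slot, global_feat)])
                  for slot in slots]
    return embeddings, maps_per_iter

def smooth_chamfer(set_a, set_b, alpha=16.0):
    """Assumed LogSumExp-smoothed Chamfer similarity over dot products."""
    a_to_b = sum(logsumexp([alpha * dot(x, y) for y in set_b]) for x in set_a)
    b_to_a = sum(logsumexp([alpha * dot(x, y) for x in set_a]) for y in set_b)
    return a_to_b / (2 * alpha * len(set_a)) + b_to_a / (2 * alpha * len(set_b))

# Toy inputs standing in for the visual and textual features of one pair.
visual_local = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
textual_local = [[0.9, 0.1], [0.2, 0.8]]
init_slots = [[1.0, 0.0], [0.0, 1.0]]

S_V, visual_maps = set_prediction(visual_local, [0.5, 0.5], init_slots)
S_T, _ = set_prediction(textual_local, [0.4, 0.6], init_slots)
similarity = smooth_chamfer(S_V, S_T)
```

In this sketch, each entry of `visual_maps` corresponds to one iteration in panel (b): it holds one attention distribution per slot over the local features. Because the same `aggregation_block` and `scale` are reused in every iteration, increasing `num_iters` adds no parameters.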